Introduction

Over the past decade, companies deploying chatbots have faced a difficult choice. One path is efficiency: automating 80% of inquiries, but ending up with a faceless system that frustrates users with its templated replies. The other path is personalization: hiring live agents who understand emotions and context, but can’t handle thousands of simultaneous requests and require 24/7 staffing at high cost.

Today, that trade-off is losing its relevance. Modern ML chatbots prove that speed and human-like interaction are not mutually exclusive. ML-driven systems can process requests ten times faster than humans while remembering conversation history, adjusting tone, and even anticipating customer needs, which is exactly what Speech-Data.ai was built to enable.

But how exactly does machine learning turn a “dumb menu” bot into a full-fledged assistant? And why are so many companies still not leveraging this technology to its full potential?

What Is an ML Chatbot?

An ML chatbot (machine learning chatbot) is a conversational agent that uses statistical models, data-driven learning methods, and natural language understanding (NLU) to understand and generate human-like language. Unlike traditional scripted bots that follow rigid if-then rules, ML chatbots can:

- Interpret varied user language, including colloquialisms and typos

- Adapt dynamically to context and previous interactions

- Improve performance over time as more training data are accumulated

At a technical level, these systems combine NLU, dialog management, and often speech recognition and text-to-speech layers for spoken conversations. By analyzing patterns in vast amounts of conversation data, they can predict the most relevant response and generate it dynamically.

For example, the phrase:

“I want to book a table for four tomorrow at 7 PM”

would be parsed into:

- Intent: book_restaurant

- Entities: party_size=4, date=tomorrow, time=19:00

This allows the chatbot to respond accurately and efficiently, without human intervention.
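
The parse above can be sketched in a few lines of Python. This is only a toy illustration: the regular expressions stand in for a trained NLU model, and the names `ParsedUtterance`, `parse_booking`, and `NUMBER_WORDS` are hypothetical, not part of any particular library.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ParsedUtterance:
    intent: str
    entities: dict = field(default_factory=dict)


# Hypothetical word-to-number map; a trained model would not need one.
NUMBER_WORDS = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5, "six": 6}


def parse_booking(text: str) -> ParsedUtterance:
    """Toy stand-in for an NLU model: regexes instead of learned weights."""
    lowered = text.lower()
    entities = {}

    # Party size: "for 4" or "for four".
    size = re.search(r"\bfor (\d+|" + "|".join(NUMBER_WORDS) + r")\b", lowered)
    if size:
        raw = size.group(1)
        entities["party_size"] = int(raw) if raw.isdigit() else NUMBER_WORDS[raw]

    # Date: only two relative words are recognized in this sketch.
    day = re.search(r"\b(today|tomorrow)\b", lowered)
    if day:
        entities["date"] = day.group(1)

    # Time: "7 pm" or "7:30 pm", converted to 24-hour format.
    clock = re.search(r"\b(\d{1,2})(?::(\d{2}))?\s*(am|pm)\b", lowered)
    if clock:
        hour = int(clock.group(1)) % 12
        if clock.group(3) == "pm":
            hour += 12
        entities["time"] = f"{hour:02d}:{clock.group(2) or '00'}"

    intent = "book_restaurant" if "book" in lowered and "table" in lowered else "unknown"
    return ParsedUtterance(intent=intent, entities=entities)
```

A production system would replace every regex here with a learned classifier and entity tagger, but the structured output (one intent plus a dictionary of entities) would look much the same.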

How the Model Works

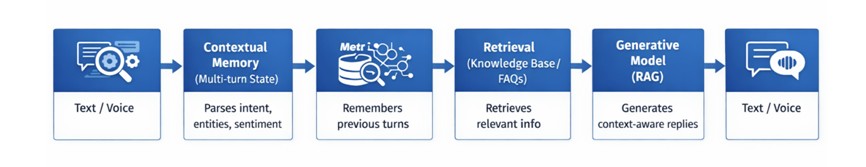

To better understand how an ML chatbot processes and generates responses, it is useful to break down its architecture into sequential functional components.

- User Input – text or voice; the user’s incoming query.

- NLU (Intent & Entity Extraction) – identifies the user’s intent, extracts entities, and detects emotions.

- Contextual Memory – stores the dialogue state, so the bot can handle multi-turn conversations and remember previous steps.

- Retrieval (Knowledge Base / FAQs) – retrieves relevant information from the knowledge base or FAQs, minimizing the risk of “hallucinations.”

- Generative Model (RAG) – generates a natural response by combining the retrieved data with the conversation context.

- Bot Response – delivers an accurate, contextually appropriate, and safe reply to the user.
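
The stages listed above can be wired together roughly as follows. This is a minimal sketch under stated assumptions: `understand`, `retrieve`, and `generate` are placeholder functions, `KNOWLEDGE_BASE` is a hypothetical in-memory dictionary, and the generator simply returns the retrieved text instead of calling a language model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DialogueState:
    # Contextual memory: (user_text, bot_text) pairs for the current session.
    turns: list = field(default_factory=list)


def understand(text: str) -> dict:
    # Placeholder NLU: a real system would call a trained classifier here.
    intent = "opening_hours" if "open" in text.lower() else "fallback"
    return {"intent": intent, "text": text}


# Hypothetical knowledge base keyed by intent.
KNOWLEDGE_BASE = {"opening_hours": "We are open 9:00-21:00, Monday to Saturday."}


def retrieve(parsed: dict) -> Optional[str]:
    return KNOWLEDGE_BASE.get(parsed["intent"])


def generate(parsed: dict, evidence: Optional[str], state: DialogueState) -> str:
    # Placeholder generator: answers only when retrieval found evidence.
    if evidence is None:
        return "I am not sure about that. Let me connect you with a colleague."
    return evidence


def handle_turn(user_text: str, state: DialogueState) -> str:
    parsed = understand(user_text)               # NLU
    evidence = retrieve(parsed)                  # Retrieval
    reply = generate(parsed, evidence, state)    # Generation
    state.turns.append((user_text, reply))       # Update contextual memory
    return reply
```

Refusing to answer when retrieval returns nothing is one simple way to keep the generator grounded; real deployments usually add confidence thresholds and human handoff on top.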

What Sets an ML Chatbot Apart from a Rule-Based System?

Traditional chatbots operate on deterministic rules. Developers write hundreds of “if‑then” statements. The advantage is obvious: behavior is fully predictable, easy to debug, and requires minimal computational power. The downside is fragility. Ask a question like, “Are you open until 9 or 8 on Saturdays?” and the system may fail, because the phrasing does not match any predefined pattern.

An ML chatbot works differently. It doesn’t store millions of rules. Instead, it is trained on thousands of real dialogues and calculates probabilities for each new phrase. Responses are generated dynamically, not from a template: the system constructs a reply that is most likely to satisfy the user in the given context.
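
To make "calculates probabilities for each new phrase" concrete, here is a deliberately small sketch. It assumes a bag-of-words cosine similarity in place of learned embeddings and a softmax to turn scores into probabilities; the `EXAMPLES` data and the `temperature` value are invented for illustration.

```python
import math
from collections import Counter

# Hypothetical training examples; a real bot would use thousands.
EXAMPLES = {
    "opening_hours": ["when are you open", "what time do you close on saturday"],
    "book_table": ["i want to book a table", "reserve a table for two"],
}


def bag(text):
    # Lowercase, drop basic punctuation, count words.
    return Counter(text.lower().replace("?", "").replace(",", "").split())


def cosine(a, b):
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def intent_probabilities(text, temperature=0.1):
    query = bag(text)
    # Score each intent by its best-matching example.
    scores = {
        intent: max(cosine(query, bag(example)) for example in examples)
        for intent, examples in EXAMPLES.items()
    }
    # Softmax: convert similarity scores into a probability distribution.
    exps = {intent: math.exp(score / temperature) for intent, score in scores.items()}
    total = sum(exps.values())
    return {intent: value / total for intent, value in exps.items()}
```

Called with "Are you open until 9 or 8 on Saturdays?", this sketch ranks `opening_hours` highest even though no example matches the phrasing exactly, which is the behavior an exact-match rule cannot provide.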

The table below highlights key differences:

| Feature | Rule-Based Chatbot | ML Chatbot |
| --- | --- | --- |
| Operation | Rigid if-then rules | Probabilistic, data-driven models |
| Understanding Synonyms | Only explicitly coded | Automatic (embeddings) |
| Handling Typos | None or very limited | Built-in (tokenization tolerant to errors) |
| Development Cost | Low at launch | High (data + training) |
| Scaling Cost | Increases linearly (more rules → more work) | Nearly zero after training |
| Predictability | Absolute | Probabilistic (95–99%) |

In short, an ML chatbot replaces hand-written rules with a model trained on thousands, or even tens of thousands, of real dialogues, and it chooses the most probable reply for a given context.

Three components ensure smooth operation:

- Natural Language Understanding (NLU): extracts intents, entities, and sometimes sentiment from unstructured text

- Contextual Memory: retains dialogue state, remembering user details, previous selections, and even rejected suggestions

- Hybrid RAG (Retrieval-Augmented Generation) Architecture: combines knowledge-base search with generative models to minimize hallucinations and ensure factual accuracy

This architecture allows ML chatbots to answer complex queries that a static bot would fail to handle.
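
Contextual memory is the easiest of the three components to illustrate. The sketch below assumes a fixed window of recent turns plus a dictionary of filled slots; the class name `ContextMemory` and the default window of 10 turns are illustrative choices, not a standard.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class ContextMemory:
    max_turns: int = 10                            # assumed window size
    slots: dict = field(default_factory=dict)      # user details collected so far
    rejected: set = field(default_factory=set)     # suggestions the user declined
    turns: deque = field(init=False)               # most recent user messages

    def __post_init__(self):
        # Old turns fall off automatically once the window is full.
        self.turns = deque(maxlen=self.max_turns)

    def record(self, user_text, entities=None, rejected=None):
        self.turns.append(user_text)
        self.slots.update(entities or {})
        if rejected:
            self.rejected.add(rejected)

    def missing(self, required):
        # Slots the bot still has to ask about.
        return [name for name in required if name not in self.slots]
```

With this structure the bot can ask only for what is still missing (for example, the time of a booking) and avoid repeating a suggestion the user has already turned down.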

Efficiency vs. Personalization: Myth or Reality?

For decades, customer service teams assumed that scale inevitably reduced quality. The logic seemed solid: the more requests an operator handles, the less time is available per customer. Traditional chatbots reinforced this stereotype. They were fast but impersonal, and personalization was often limited to inserting the user’s name into a canned reply.

Modern ML chatbots demonstrate that efficiency and personalization are different dimensions, not opposites.

Mechanisms enabling this include:

- Asynchronous processing: ML models can handle thousands of simultaneous requests without performance degradation

- Scaling empathy: training on annotated dialogues allows recognition of real-time user emotions and selection of an appropriate tone

- Dynamic personalization: ML bots build behavioral profiles automatically, allowing for context-sensitive offers, reminders, or follow-ups
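
Asynchronous processing, the first mechanism above, can be sketched with Python's standard `asyncio` module. The `answer` coroutine is a stand-in for a non-blocking call to a model server, and `max_in_flight` is an assumed limit; actual throughput depends on the serving hardware behind that call.

```python
import asyncio


async def answer(message: str) -> str:
    # Stand-in for a non-blocking request to a model server.
    await asyncio.sleep(0.01)
    return f"echo: {message}"


async def answer_all(messages, max_in_flight=100):
    # Cap concurrent requests so the backend is not overwhelmed.
    limiter = asyncio.Semaphore(max_in_flight)

    async def guarded(message):
        async with limiter:
            return await answer(message)

    # gather runs the coroutines concurrently and preserves input order.
    return await asyncio.gather(*(guarded(m) for m in messages))
```

A single process can keep hundreds of conversations in flight this way, because it spends most of its time waiting on the model server rather than computing.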

For instance, a retail ML chatbot can:

- Recommend products based on a user’s browsing and purchase history

- Recognize frustration or confusion in the user’s language and escalate to a human agent if necessary

- Adapt communication style to match a user’s preferred tone, e.g., formal vs. casual

The table below compares key metrics:

| Metric | Live Agent | Rule-Based Bot | ML Chatbot |
| --- | --- | --- | --- |
| Average Response Time (sec) | 45–120 | 0.3–1 | 0.5–2 |
| 24/7 Availability | No (shift-based) | Yes | Yes |
| Emotion Recognition | High | None | Medium (improves with data) |
| Cost per 1,000 Dialogues | $200–500 | $5–15 | $10–30 |
| Context Retention | Full | None | Partial (5–10 turns) |
| Max Parallel Dialogues | 1–3 per agent | Thousands | Thousands |

Gartner (2025), “Hype Cycle for Artificial Intelligence”: By 2027, ML-based agent chatbots will replace 30% of frontline B2C support operators.

Pitfalls: Why Many Projects Fail

The most common answer to “Why isn’t our chatbot working?” is the same across startups and corporations: “We gave the model poor data.” An ML chatbot is essentially a distillation of the dialogues it was trained on. If the training set lacks examples of angry customers, the bot won’t learn how to respond appropriately. If all dialogues are perfectly grammatical, the bot won’t understand natural speech that contains slips and informal phrasing.

Common data issues and consequences are summarized below:

| Data Issue | Effect on Bot Performance | Solution |
| --- | --- | --- |
| Class imbalance (80% complaints, 5% questions) | Bot interprets every other message as a complaint | Collect additional data, use augmentation |
| Lack of typo examples | Bot cannot parse “grafik roboty” (a misspelling of the transliterated Russian “grafik raboty”, “working hours”) | Add synthetic typos |
| No emotion annotation | Bot replies neutrally to anger | Professional annotation |
| Too few dialogues (<1,000) | Low accuracy on new topics | Collect production data |
| Text only (no voice) | Bot fails on audio messages | Add transcribed voice data |
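
Two of the fixes in the table, checking class balance and adding synthetic typos, are simple enough to sketch. The helper names below are hypothetical, and swapping adjacent characters is only one of several possible typo models (others include dropped or doubled letters).

```python
import random
from collections import Counter


def class_shares(labels):
    # Fraction of the dataset that each label accounts for.
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def add_typo(text, rng):
    """Swap two adjacent characters at a random position."""
    if len(text) < 2:
        return text
    i = rng.randrange(len(text) - 1)
    chars = list(text)
    chars[i], chars[i + 1] = chars[i + 1], chars[i]
    return "".join(chars)


def augment(samples, rng, copies=2):
    # Keep the originals and append noisy copies with the same label.
    out = list(samples)
    for text, label in samples:
        out.extend((add_typo(text, rng), label) for _ in range(copies))
    return out
```

Passing a seeded `random.Random` instance keeps the augmentation reproducible, which helps when comparing model versions trained on the same data.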

A high-quality dataset for a production ML chatbot isn’t 100–200 dialogues, but tens of thousands. Each dialogue must be transcribed (if voice-based) and labeled for intents, entities, and emotional markers. Manual preparation can take months, and automation is difficult because automated labeling itself requires pre-trained models.

One Technology, Many Faces

A chatbot in e-commerce and a chatbot in a medical clinic serve entirely different purposes. In the first case, a customer wants to quickly find a product. In the second, they want to describe symptoms without receiving dangerous advice. There is no universal ML chatbot. However, proven patterns exist for each industry vertical.

The list below ranks industries by the severity of potential errors, from least to most critical.

1. E-commerce & Retail

Key intents: product search, comparison, returns.

Critical error: recommending the wrong size or incompatible accessory.

Priority: speed and accuracy.

According to a Forrester Consulting report (2024), ML chatbots in e-commerce reduce returns by 18% thanks to precise recommendations.

2. Telecommunications

Key intents: plan changes, checking balances, service cancellations.

Critical error: activating a paid service without explicit consent.

Priority: transparency.

The bot should clearly indicate the basis of its answers (linking to the contract or tariff).

3. Education & EdTech

Key intents: schedules, homework, grades.

Critical error: losing student progress or confusing users.

Priority: context and long-term memory.

The bot should retain conversation history even after a two-week gap.

4. Banking & Finance

Key intents: balance inquiries, transfers, card blocking.

Critical error: exposing someone else’s account data or sending funds to the wrong recipient.

Priority: security.

Gartner research (2025) shows that ML chatbots in banking reduce false card blocks by 23%, distinguishing fraud from normal customer activity more accurately.

5. Healthcare & Medicine

Key intents: scheduling appointments, interpreting tests, describing symptoms.

Critical error: giving direct medical advice.

Even a 1% error can be life-threatening.

Priority: caution and mandatory disclaimer. Every medical response must include:

“I am an AI, not a doctor. Please consult a medical professional.”
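
One way to make the disclaimer truly mandatory is to append it in code after generation rather than relying on the model to include it. A minimal sketch, with `with_disclaimer` as a hypothetical helper name:

```python
DISCLAIMER = "I am an AI, not a doctor. Please consult a medical professional."


def with_disclaimer(reply: str) -> str:
    """Append the disclaimer unless the reply already ends with it."""
    reply = reply.strip()
    if reply.endswith(DISCLAIMER):
        return reply
    return f"{reply}\n\n{DISCLAIMER}"
```

Applying this as the last step of the response pipeline means the disclaimer cannot be dropped by a prompt change or an unexpected model output.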

Key Takeaways for ML Chatbot Deployment

- Prioritize High-Quality Data – ML bots learn from examples, not rules. Include varied phrasing, typos, emotions, and domain-specific cases.

- Context & Vertical Knowledge Matter – Maintain multi-turn dialogue states and integrate domain ontologies or knowledge graphs to improve intent recognition and entity extraction.

- Hybrid Architectures Reduce Hallucinations – Use Retrieval-Augmented Generation (RAG), which pairs knowledge-base retrieval with a generative model, to ground responses in verified data and support safer answers in high-risk domains like healthcare and finance.

- Monitor Key Metrics – Track latency, context retention, intent accuracy, sentiment recognition, and escalation rates. Logging raw inputs and confidence scores supports auditability and provides material for model retraining.

- Iterative, Vertical-Specific Deployment – Pilot in one domain, collect production data, fine-tune the model, then expand. Use A/B testing and feedback loops to optimize performance.

- Security & Compliance by Design – Apply encryption, mask PII, and maintain audit trails for regulatory compliance (HIPAA, GDPR, PCI DSS).

- Continuous Learning – Capture flagged interactions, retrain periodically, and integrate agent feedback to keep the chatbot adaptive to evolving language and customer behavior.
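
The logging and PII-masking points above can be combined in one small sketch. The regular expressions are rough assumptions that catch common email and card-number formats only; they are not a compliance-grade detector, and the field names in `log_record` are illustrative.

```python
import json
import re

# Rough patterns for illustration; real deployments need stricter detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")  # 13-19 digits, optional separators


def mask_pii(text: str) -> str:
    text = EMAIL.sub("[EMAIL]", text)
    return CARD.sub("[CARD]", text)


def log_record(user_text, intent, confidence, escalated):
    # One JSON line per turn: masked input plus the signals needed for audits.
    return json.dumps({
        "input": mask_pii(user_text),
        "intent": intent,
        "confidence": round(confidence, 3),
        "escalated": escalated,
    })
```

Masking before the record is written means raw personal data never reaches the log store, while intent and confidence remain available for monitoring and retraining.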

Bottom line: Success depends on data quality, architecture robustness, monitoring, and continuous improvement. With these in place, ML chatbots can deliver scalable, personalized, and secure customer interactions.

Conclusion

Businesses no longer have to choose between the speed of a robot and the warmth of a human. The modern ML chatbot is a bridge that connects these two shores. It responds instantly, but not with templates. It scales to millions of conversations, yet handles each one as if the customer were the only one.

Of course, the magic doesn’t happen by itself. Behind every smart bot lies an architecture of NLU, memory, and RAG pipelines, plus thousands of hours of data annotation.

Beginners and solo enthusiasts should remember that a simple ML chatbot can be launched in 2–3 months with just a laptop and open data. But for an industrial-grade solution that delivers ROI and delights customers, you will need to invest in data and architecture. And that’s perfectly normal. That’s how any serious ML system works.

The gap between efficiency and personalization is closing. The question is not whether you can bridge it. The question is whether you will do it before your competitors do.